[INFO] Development Journal

Re: [INFO] Development Journal

Yeah, I wish you more time (and maybe in your breaks you can do something more with the SDK :p). A million people are waiting for it. xD

- Dr.Flay

- Godlike

- Posts: 3348

- Joined: Thu Aug 04, 2011 9:26 pm

- Personal rank: Chaos Evangelist

- Location: Kernow, UK

Re: [INFO] Development Journal

Can someone remove all of Shadow's metal that is slower than 170 BPM?

ChaosUT https://chaoticdreams.org

Your Unreal resources: https://yourunreal.wordpress.com

The UT99/UnReal Directory: https://forumdirectory.freeforums.org

Find me on Steam and GoG

Torax

- Adept

- Posts: 406

- Joined: Fri Apr 20, 2012 6:38 pm

- Personal rank: Master of Coop

- Location: Odessa, Ukraine

Re: [INFO] Development Journal

The latest news.

The UT SDK is not dead, as some may have thought.

Yesterday I provided Shadow with some very important source code that may open up a lot of possibilities for graphics and performance. He even talked about implementing GLSL. As I understand from his words, it will be possible to use bump mapping, detail textures not only on level geometry, shaders, high-poly stuff and much more. So he's returning to work.

As for me, I'm suspending most of my other work to focus on development of the SDK. There is only one thing I still have to do: start my project of remodelling the UT99 weapons and related stuff. I will use the default code to script them (maybe only adding some optimizations and small changes that won't have a big effect on gameplay and possibilities). I will also try to make the new models fit the original ones as closely as I can, to preserve the visuals and feel of the original weapons. Apart from that, I will help Shadow with coding and testing.

We're back at work.

The new release is not as far off as it was before.

Unreal. Alter your reality..forever...

Re: [INFO] Development Journal

I love to 'hear' that, simply amazing news every day on these forums. Good luck with getting this stuff working. Cheers

- Feralidragon

- Godlike

- Posts: 5493

- Joined: Wed Feb 27, 2008 6:24 pm

- Personal rank: Work In Progress

- Location: Liandri

Re: [INFO] Development Journal

Some words (but first a disclaimer: I am still new to this C++ and OpenGL/DirectX stuff, so if I make any crass mistake or spout any nonsense here, please point it out with whatever degree of severity you find proper):

- Although I didn't say anything before, some time ago I tried the SDK itself as a "product" (loading it in UEd and all), and one thing in particular disappointed me to a good extent.

With all the fuss around static meshes, I opened the test maps thinking "cool, finally I will see some static mesh usage"... but instead, all I saw was BSP. That was disappointment number 1.

Later, I saw that there was a sandbox package with some static mesh samples. I added them to the map to check them out, and once in game I had disappointment number 2: the static meshes brought the game to its knees in terms of performance. I didn't even need to look at them for the massive lag to kick in. At first I thought it was the dynamic lighting (since it was used heavily), but no, it was the static meshes...

Not only that: if you preview stuff in UEd, there's probably a memory leak somewhere, since it becomes unusable after a few seconds of realtime preview in at least one of the viewports, but that's a separate issue.

Now, why am I only bringing this up now? Instead of just giving a bad impression of them and being the typical whining fella every developer hates (they obviously took some work already, they aren't meant for production use yet, and the performance issue was perhaps the main reason you added LOD levels to them from the start), I decided to take a look at how you were doing things.

The first thing that jumped out is that you are making the exact same mistake Epic made with mesh rendering: you're painfully and slowly rendering one single tri/poly at a time, with a draw call for each. In other words, just as with any other normal mesh, every poly gets its own long and slow call to the GPU, which explains a lot of the performance issues of normal meshes (along with the benchmarking I requested some time ago), and to some extent your performance issues as well.
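A toy C++ model of the call-count difference described above. It does not touch a real graphics API: `Triangle`, `DrawCallCounter`, `RenderPerPoly` and `RenderBatched` are hypothetical names, and each "submission" is just a counter standing in for one CPU->GPU draw call.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Toy model only: counts submissions instead of calling a real graphics API.
struct Triangle { float v[3][3]; };  // three xyz vertices

struct DrawCallCounter {
    std::size_t calls = 0;
    std::size_t trianglesSubmitted = 0;
    void Submit(std::size_t triangleCount) {
        ++calls;                         // one driver round trip
        trianglesSubmitted += triangleCount;
    }
};

// Per-poly path: one submission per triangle (the pattern criticised above).
void RenderPerPoly(const std::vector<Triangle>& mesh, DrawCallCounter& gpu) {
    for (std::size_t i = 0; i < mesh.size(); ++i) gpu.Submit(1);
}

// Batched path: the whole mesh in a single submission.
void RenderBatched(const std::vector<Triangle>& mesh, DrawCallCounter& gpu) {
    if (!mesh.empty()) gpu.Submit(mesh.size());
}
```

For a 1,000-triangle mesh the per-poly path issues 1,000 submissions per frame and the batched path issues one, for the same number of triangles.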

The biggest problem with doing things that way is not even the massive overhead of such calls in CPU->GPU communication, but the fact that you're literally forcing the GPU to use a single core to render each poly. Due to the single-threaded nature of the engine, the entire scene gets rendered by one poor GPU core doing most of the heavy work (except perhaps the textures and other graphical things, which usually can still take advantage of the cores). That matters a lot nowadays, when modern GPUs have several dozens to hundreds of cores that could parallelize by themselves the work of rendering an entire complex 3D model.

Now, I have been experimenting with the render and render devices, both in OpenGL and Direct3D (D3D9), and working out how things work both in the engine and on the device side (as well as logging each function to "debug" it and see exactly what it was doing every frame). At the same time, I have been reading sites and documents on the best ways to render complex objects, and one of the obvious things that jumps out is VBOs (Vertex Buffer Objects), which in DirectX are just VBs (same meaning, without "object").

I am pretty sure you know what a VBO is, but for the sake of anyone else following this discussion: a VBO is an object made of vertex data that is stored directly in GPU RAM, so it doesn't have to be re-sent by the CPU every frame. Basically, you load all the vertices of a model/mesh along with their attributes (positions, UVs, colors, etc.), store them once and statically* (generally speaking; it doesn't have to be that way, there are 3 modes) in GPU RAM, and give the buffer a UID. Whenever you want to render that model, instead of making a call for each and every single poly, texture assignment and whatnot, you just make a single call to the GPU with the UID of the VBO it should render.
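A minimal sketch of the "upload once, draw by id" idea, assuming interleaved position + UV vertices. `FakeVertexBufferStore` and its methods are hypothetical stand-ins that keep the data in CPU memory. In a real OpenGL driver the upload step would roughly correspond to `glGenBuffers` + `glBindBuffer` + `glBufferData` (whose usage hint has STATIC/DYNAMIC/STREAM variants, the "3 modes" mentioned above), and the draw step to binding the buffer and issuing one `glDrawArrays`/`glDrawElements` call.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <unordered_map>
#include <vector>

struct Vertex { float x, y, z; float u, v; };  // interleaved position + UV

// Mirrors the STATIC/DYNAMIC/STREAM part of the glBufferData usage hint.
enum class BufferUsage { Static, Dynamic, Stream };

// Hypothetical stand-in for GPU-side storage: data is copied once at upload
// and later draws only pass an id, never the vertices themselves.
class FakeVertexBufferStore {
public:
    std::uint32_t Upload(const std::vector<Vertex>& verts, BufferUsage usage) {
        const std::uint32_t id = nextId_++;
        buffers_[id] = Entry{verts, usage};
        ++uploads_;
        return id;
    }
    // One call per mesh; returns how many vertices that call covers.
    std::size_t Draw(std::uint32_t id) {
        auto it = buffers_.find(id);
        assert(it != buffers_.end() && "unknown buffer id");
        ++drawCalls_;
        return it->second.vertices.size();
    }
    std::size_t uploads() const { return uploads_; }
    std::size_t drawCalls() const { return drawCalls_; }

private:
    struct Entry { std::vector<Vertex> vertices; BufferUsage usage; };
    std::unordered_map<std::uint32_t, Entry> buffers_;
    std::uint32_t nextId_ = 1;
    std::size_t uploads_ = 0;
    std::size_t drawCalls_ = 0;
};
```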

The difference in performance would probably be huge, since from there the performance of a model render would depend almost entirely on the GPU itself, which is probably close to what Unreal Engine 2 does. Plus, the exact same thing is supported in DirectX, where it actually seems more straightforward, as it does some "magic" that OpenGL does not.

That said, you should probably think about reworking the static meshes to be rendered fully through VBOs (if you haven't already).

Also, I am going to do my own experiments with VBOs, probably this coming weekend, to check whether it works as planned and really performs as well as I think it will. I am sharing this already to see what you have to say about it, whether you already knew about it, and whether there's something obvious I am not considering so far.

If you didn't, or didn't plan to, and I succeed in testing and implementing it, I can of course share the code and the necessary calls for UT specifically (I generally don't hide my sources anyway).

Also, 2 questions that have been bugging me ever since I started looking into this, if I may:

1 - Do you know if there's a way to directly intercept the rendering of an actor (before the DrawGouraud calls are made and such)? From what I can tell from your implementation, you didn't even try to override it; you just extended it to render the new stuff your own way and let the old stuff render its own way.

2 - Do you know where exactly (in the render device, like OpenGL) the sprites are rendered? Meshes and BSP are obvious, as are the Canvas calls, but for the life of me I can't figure out where or how the sprites are rendered at all in the engine (at first I thought they would also be gouraud polys always facing the viewport, but they aren't, at least not from that function).

- Shadow

- Masterful

- Posts: 743

- Joined: Tue Jan 29, 2008 12:00 am

- Personal rank: Mad Carpenter

- Location: Germany

Re: [INFO] Development Journal

Yes, I already considered VBOs. Most of the memory/performance leaks aren't present in the current build anymore, because I had similar problems too; in wireframe view especially it was horrible. But even though these improvements are quite a leap forward, implementing VBOs would be a more than decent way to achieve real static meshes.

Question 1 - Yes, I know where and when, and I'm currently doing it by exchanging the DrawMesh functions with my own version, so ALL actors benefit from the better possibilities without needing an additional actor (like StaticMeshActor). And yes, I didn't try before, because it wasn't necessary yet. I have the improved sprite system, the new meshes, the particle engine etc. I'm using as few actors as possible, and where I needed a sub-render interface (directly calling a group of actors from the render), I simply coded it... for the systems described previously.

Question 2 - Yes, I know where and when. They're rendered together with meshes in URender::DrawActor / URender::DrawActorSprite.
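Shadow's reply only names the functions, not how they build sprite geometry. Purely as a generic illustration (not the engine's code), one common way to draw a sprite is a camera-facing quad built from the camera's right and up vectors. `Vec3` and `BillboardCorners` are hypothetical names, and Z-up is assumed as in UT.

```cpp
#include <array>
#include <cassert>

struct Vec3 { float x, y, z; };
Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }

// Corners of a quad centred on `center` that always faces the camera:
// it spans the camera's right and up axes, so it lies in the view plane.
std::array<Vec3, 4> BillboardCorners(Vec3 center, Vec3 camRight, Vec3 camUp,
                                     float halfWidth, float halfHeight) {
    assert(halfWidth >= 0.0f && halfHeight >= 0.0f);
    const Vec3 r = camRight * halfWidth;
    const Vec3 u = camUp * halfHeight;
    return {center + r * -1.0f + u * -1.0f,  // bottom-left
            center + r + u * -1.0f,          // bottom-right
            center + r + u,                  // top-right
            center + r * -1.0f + u};         // top-left
}
```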

Re: [INFO] Development Journal

Shadow wrote: ...so ALL actors benefit from better possibilities...

This would be the first major step toward using the SDK at all times, even online.

It'd take a few adjustments to ACE so that it recognizes the new 'Traces' and 'Render' functions, to prevent SDK-based cheats.

EDIT:

Btw, if you're implementing a new DrawActor-based routine, remember to avoid crashing the engine when rendering actors with bParticles=True and no Texture set on them (Epic never fixed this).

- Shadow

- Masterful

- Posts: 743

- Joined: Tue Jan 29, 2008 12:00 am

- Personal rank: Mad Carpenter

- Location: Germany

Re: [INFO] Development Journal

Yeah, you simply have to look at the source then... the new trace functions aren't that complicated; they just call the old ones and add the routines needed for the volumes, that's all. The trickier part is implementing them in the pawns' physics.
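A sketch of the "call the old trace, then add the volumes" idea, under heavy assumptions: volumes are approximated as axis-aligned boxes, the original trace result is passed in as an optional hit distance, and `Box`, `RayBox` and `TraceWithVolumes` are hypothetical names, not the SDK's functions. The box test is the standard slab method.

```cpp
#include <algorithm>
#include <cassert>
#include <optional>
#include <utility>
#include <vector>

struct Vec3 { float x, y, z; };
struct Box { Vec3 min, max; };  // axis-aligned stand-in for a blocking volume

// Slab test: distance t along dir where the ray enters the box, if within [0, maxT].
std::optional<float> RayBox(Vec3 o, Vec3 d, const Box& b, float maxT) {
    assert(maxT >= 0.0f);
    float tmin = 0.0f, tmax = maxT;
    const float oa[3] = {o.x, o.y, o.z}, da[3] = {d.x, d.y, d.z};
    const float lo[3] = {b.min.x, b.min.y, b.min.z}, hi[3] = {b.max.x, b.max.y, b.max.z};
    for (int i = 0; i < 3; ++i) {
        if (da[i] == 0.0f) {  // ray parallel to this slab
            if (oa[i] < lo[i] || oa[i] > hi[i]) return std::nullopt;
            continue;
        }
        float t1 = (lo[i] - oa[i]) / da[i], t2 = (hi[i] - oa[i]) / da[i];
        if (t1 > t2) std::swap(t1, t2);
        tmin = std::max(tmin, t1);
        tmax = std::min(tmax, t2);
        if (tmin > tmax) return std::nullopt;
    }
    return tmin;
}

// Hypothetical wrapper: keep the original trace's hit, let volumes shorten it.
std::optional<float> TraceWithVolumes(Vec3 o, Vec3 d, float maxT,
                                      std::optional<float> oldTraceHit,
                                      const std::vector<Box>& volumes) {
    std::optional<float> best = oldTraceHit;
    for (const Box& v : volumes)
        if (auto t = RayBox(o, d, v, best ? *best : maxT))
            if (!best || *t < *best) best = t;
    return best;
}
```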

Btw: cool tip on that, dude! I'll include your suggestion, so that it simply uses the default texture if no texture is set.
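A minimal sketch of the fallback described here, assuming hypothetical `Texture`/`ActorView` types in place of the engine's actor and texture classes: if an actor has no texture, the particle draw code gets the default texture instead of a null pointer.

```cpp
#include <cassert>
#include <string>

struct Texture { std::string name; };

// Hypothetical actor view mirroring the properties mentioned above.
struct ActorView {
    bool bParticles = false;
    const Texture* texture = nullptr;  // may be unset in UnrealEd
};

// Never hand a null texture to the particle drawing code.
const Texture& ParticleTextureOrDefault(const ActorView& actor, const Texture& defaultTexture) {
    return actor.texture ? *actor.texture : defaultTexture;
}
```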

- Feralidragon

- Godlike

- Posts: 5493

- Joined: Wed Feb 27, 2008 6:24 pm

- Personal rank: Work In Progress

- Location: Liandri

Re: [INFO] Development Journal

Thanks for the info.

I tried to log DrawActor in my own render, but it didn't log anything at all (despite actors getting rendered). I also noticed now that, unlike you, I wasn't including RenderPrivate.h anywhere, and all the calls are listed there after all. Which btw leads me to another question: I am using the Render.lib you included in your SDK source so I could bind my render, but it's not included in the public headers as far as I can tell, and not even in the surreal repository on GitHub, so... how did you get both RenderPrivate.h and Render.lib?

EDIT: Sorry, just one more question: given RenderPrivate.h... I tried to extend URender, but it doesn't even compile since it can't see the methods declared in URender (which is logical, since all the methods seem to be non-virtual and the class is static). So the approach is probably to extend URenderBase (which has virtual methods), load the default Render like you did and then call its functions (acting like an interface), but then DrawActor and DrawActorSprite don't get called, since URender itself calls them.

So, when you say you're switching the DrawMesh calls, which happen only in URender (afaik), how are you able to intercept them?
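The behaviour described above is ordinary C++: a derived class can hide a non-virtual method, but calls made from inside the base class stay bound to the base version. A self-contained illustration with hypothetical class names (not the real URender declarations):

```cpp
#include <cassert>
#include <string>

// Non-virtual: the internal call in DrawFrame is bound at compile time.
struct StockRender {
    std::string DrawActor() { return "stock"; }
    std::string DrawFrame() { return DrawActor(); }  // always StockRender::DrawActor
};

struct MyRender : StockRender {
    std::string DrawActor() { return "mine"; }  // hides, does not override
};

// Virtual: the same internal call is dispatched through the vtable.
struct StockRenderV {
    virtual ~StockRenderV() = default;
    virtual std::string DrawActor() { return "stock"; }
    std::string DrawFrame() { return DrawActor(); }
};

struct MyRenderV : StockRenderV {
    std::string DrawActor() override { return "mine"; }
};
```

Calling `DrawFrame()` on a `MyRender` still reaches the stock `DrawActor`, which is why deriving alone cannot intercept calls the base class makes internally.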

- Shadow

- Masterful

- Posts: 743

- Joined: Tue Jan 29, 2008 12:00 am

- Personal rank: Mad Carpenter

- Location: Germany

Re: [INFO] Development Journal

Hm, dunno. They were in the modified headers that were going around back when improved headers were needed (to work with modern x64/x86 Windows platforms). It could also be that I found them in one of the other native mods, most likely dots' ones.

Yes, that's a major problem if you want to code your own base render.

Well, YES and NO. I use the "old" standard functions of the standard render, so I don't have to rewrite them, as some of them can't be extended or called because they're abstract (and you can't subclass URender!! -.-). But my render is set as the main render (in the ini files), so ALL calls go to my render first and then back to the standard render (which is URender again, if needed). I have yet to check ALL the functions to see whether they really need that procedure or whether I can simply leave them out.

- Feralidragon

- Godlike

- Posts: 5493

- Joined: Wed Feb 27, 2008 6:24 pm

- Personal rank: Work In Progress

- Location: Liandri

Re: [INFO] Development Journal

Thanks for the info, although in the meantime I already got past that phase.

Extending URenderBase was my first approach (the same as yours, in fact); then I tried to extend URender (to intercept the calls). I did get it working to an extent at some point (after fixing RenderPrivate.h, manually generating a new Render.lib from Render.dll, and finally adding a few bytes (a byte array member) to my render so the class size matched that of URender and passed its first assert without crashing). But since the function calls were still the way you describe, and the calls were still made in URender, my version ended up working pretty much like an extended URenderBase, in other words, useless lol

So I figured this certainly wasn't the way to go, even more so because if I managed to hack a bit further into it and hook into the function calls to make certain things possible, I would probably end up in trouble with Epic lol, so it was definitely a no-no.

Thus I went back to the first approach (the same as yours once again), and currently I am doing the same: extending URenderBase, setting my render as the main one, loading the standard one and forwarding the function calls to it. From there, I have the whole thing structured my own way.
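A minimal sketch of that forwarding arrangement, assuming a hypothetical `IRender` interface with virtual methods in place of URenderBase (the real class has different methods and signatures). The custom render is the one the engine talks to; it handles what it wants and forwards everything else to the stock implementation it loaded. The "SM_" name prefix is only an illustrative routing rule.

```cpp
#include <cassert>
#include <memory>
#include <string>
#include <utility>
#include <vector>

// Hypothetical interface standing in for a render base class with virtual methods.
struct IRender {
    virtual ~IRender() = default;
    virtual void DrawWorld(std::vector<std::string>& log) = 0;
    virtual void DrawActor(const std::string& name, std::vector<std::string>& log) = 0;
};

// Stand-in for the stock render that already implements everything.
struct StockRender : IRender {
    void DrawWorld(std::vector<std::string>& log) override { log.push_back("stock:world"); }
    void DrawActor(const std::string& n, std::vector<std::string>& log) override { log.push_back("stock:" + n); }
};

// The render registered as the main one: handles its own cases, forwards the rest.
class ProxyRender : public IRender {
public:
    explicit ProxyRender(std::unique_ptr<IRender> fallback) : fallback_(std::move(fallback)) {
        assert(fallback_ != nullptr);
    }
    void DrawWorld(std::vector<std::string>& log) override { fallback_->DrawWorld(log); }
    void DrawActor(const std::string& n, std::vector<std::string>& log) override {
        if (n.rfind("SM_", 0) == 0) log.push_back("proxy:" + n);  // own path (illustrative)
        else fallback_->DrawActor(n, log);                         // everything else: stock
    }
private:
    std::unique_ptr<IRender> fallback_;
};
```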

And I won't try to intercept the mesh render calls directly; I will just add a new way to render them instead (an extension, if you will). A few things are going to be tricky that way (mostly for online play, due to the way some vars are defined for replication), but it's probably better this way (at least for me).

But I am still taking small baby steps, so it will take a while.

I already managed to render a mesh in wireframe my own way in OpenGL (which extends my own custom render device with custom calls), with the right rotation, proportions and everything, mostly to check whether my interpretation of the mesh data was heading in the right direction. I had a problem in the beginning: it crashed UEd. Then I figured out that UEd is really "lazy" about loading "lazy arrays" lol, so after forcing certain things to load for testing, the problems were fixed and I can now see it in UEd as well. Currently I am trying to render it as a VBO (first I will try to render basic texture-less polys, then advance from there).
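The "lazy array" problem can be illustrated with a small hypothetical class (not the engine's own lazy array type, whose API is not shown here): the data only exists after an explicit load, so code that reads the raw storage first sees nothing, while an accessor that forces the load always works.

```cpp
#include <cassert>
#include <functional>
#include <utility>
#include <vector>

// Hypothetical lazily loaded array: contents appear only after Load().
template <typename T>
class LazyArray {
public:
    explicit LazyArray(std::function<std::vector<T>()> loader) : loader_(std::move(loader)) {
        assert(loader_ != nullptr);
    }
    bool IsLoaded() const { return loaded_; }
    void Load() {
        if (!loaded_) { data_ = loader_(); loaded_ = true; }  // runs the loader once
    }
    // Safe accessor: forces the load before handing out the data.
    const std::vector<T>& Get() { Load(); return data_; }
    // Unsafe raw view: empty until something has called Load().
    const std::vector<T>& Raw() const { return data_; }
private:
    std::function<std::vector<T>()> loader_;
    std::vector<T> data_;
    bool loaded_ = false;
};
```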

I had to use GLEW though, otherwise I couldn't compile the new OpenGL driver with the VBO calls (which I guess is normal), and now I am reading up on which data structures they actually expect. I will probably post some code snippets afterwards if I manage to get it working the way I want (which may also take a while, this stuff is tricky lol).

EDIT: Well, I think I got the hang of how VBOs work, so I already have a Nali being rendered (badly lol), with some heavy bugs. That's mainly because, for testing purposes, I am initializing the VBO, initializing GLEW, building the whole thing (with some dummy data for the normals and UVs), rendering, and then destroying it, which makes it very slow and buggy, at least in my virtual machine lol. But so far it seems that VBOs can be implemented in UT/OpenGL, and GLEW works well enough.
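The slowness described in this EDIT comes from doing one-time work every frame. A sketch of the usual fix, with hypothetical names and an integer counter standing in for buffer creation and upload: build each mesh's buffer once, keep its handle, and reuse it on later frames (likewise, `glewInit` only needs to run once, after the GL context has been created).

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <unordered_map>

// Hypothetical cache: build each mesh's GPU buffer once, reuse the handle every frame.
class MeshBufferCache {
public:
    std::uint32_t GetOrBuild(const std::string& meshName) {
        auto it = handles_.find(meshName);
        if (it != handles_.end()) return it->second;  // fast path: already built
        const std::uint32_t handle = ++builds_;        // stands in for gen + upload
        handles_.emplace(meshName, handle);
        return handle;
    }
    // Drop a mesh's entry, e.g. on level change (a real driver would free the buffer).
    void Release(const std::string& meshName) { handles_.erase(meshName); }
    std::size_t builds() const { return builds_; }
private:
    std::unordered_map<std::string, std::uint32_t> handles_;
    std::uint32_t builds_ = 0;
};
```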

- Shadow

- Masterful

- Posts: 743

- Joined: Tue Jan 29, 2008 12:00 am

- Personal rank: Mad Carpenter

- Location: Germany

Re: [INFO] Development Journal

Current (preliminary) Change log for September Release

Rendering / Visuals

- added: new Texture Blending Equation Options, you may now change how the blend equation is set, supported Modes:

- added: Support for new DrawTypes, new DrawTypes include (subclassing sdkActor.uc!):

- fixed: rewrote 100% of the code for the new Texture Blending Modes

- fixed: crash when setting bParticles=True with no Texture set (old Bug Epic Games never fixed)
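For reference, the blend equation modes listed in this changelog presumably correspond to the OpenGL blend equations (`GL_FUNC_ADD`, `GL_FUNC_SUBTRACT`, `GL_FUNC_REVERSE_SUBTRACT`, `GL_MIN`, `GL_MAX`). The sketch below computes one colour channel on the CPU to show what each equation does. The enum and function names are hypothetical, not the SDK's. Note that `GL_MIN`/`GL_MAX` ignore the blend factors.

```cpp
#include <algorithm>
#include <cassert>

// Mode names follow the changelog; the math follows OpenGL's blend equations.
enum class BlendEquation { Add, Subtract, ReverseSubtract, Min, Max };

// One colour channel in [0,1]; srcF/dstF are the blend factors (as set by glBlendFunc).
float BlendChannel(BlendEquation eq, float src, float dst, float srcF, float dstF) {
    float out = 0.0f;
    switch (eq) {
        case BlendEquation::Add:             out = src * srcF + dst * dstF; break;
        case BlendEquation::Subtract:        out = src * srcF - dst * dstF; break;
        case BlendEquation::ReverseSubtract: out = dst * dstF - src * srcF; break;
        case BlendEquation::Min:             out = std::min(src, dst); break;  // factors ignored
        case BlendEquation::Max:             out = std::max(src, dst); break;  // factors ignored
    }
    return std::clamp(out, 0.0f, 1.0f);  // normalized framebuffers clamp the result
}
```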

Particle Engine

- added: new Emitter Class

- updated: improved performance in Wireframe Mode

Post Processing

- fixed: non working Texture Blending

System Functionality / Packages

- added: version build Info for graphics drivers (on request)

- added: new Static Mesh Package 'ShowcaseStatic'

- updated: improved Linked List management

UnrealED / Unreal Script / C++

- added: new Bounding Volume features/classes

- added: Light Pool Support

Gameplay & Level Design

- updated: highly improved performance in the Showcase Maps

Menus / Options

- added: long awaited full Options Menu

Rendering / Visuals

- added: new Texture Blending Equation Options, you may now change how the blend equation is set, supported Modes:

  - BE_Add (Standard)

  - BE_Subtract

  - BE_Reverse Subtract

  - BE_Min, BE_Max

  - all supported by Sprites, the Particle Engine, Canvas, Post Processing and BSP (hopefully Meshes soon)

- added: Support for new DrawTypes (requires subclassing sdkActor.uc!):

  - DT_AdvSprite

  - DT_AdvMesh

  - DT_StaticMesh

  - to make at least DT_AdvMesh work on old Actors, use the OLD DT_RobeSprite (mainly for Material Support on weapons for the upcoming UT Remodelling Project, UTRP)

- improved skin management (internal)

- improved memory management (internal)

- highly improved Wireframe Rendering Performance

- highly improved Poly/Lit Rendering Performance

- built-in Support for Blocking Volume Collision (so you don't need to add an extra Blocking Volume Actor)

- Sub/Multi Texture Support (Texture Layers, Detail Textures etc.)

- Light Shading, Texture Blending and U/V Mapping can be applied per Skin rather than globally by the main options

- switch between Wireframe and Polygon Rendering (in Editor and in Game)

- multiple conditions for how a Static Mesh receives Light (within Radius, Zone, Volume, LineCheck, single Actor etc.), may improve performance

- Static Mesh LOD Support, works by using different versions of a mesh depending on distance (max. 4 different versions)

- VisiblePoly and LitPoly Reduction Option (to hide polys that are not visible)

- Polys are displayed according to current view mode in Editor: Texture Use (shows difference of skins on Static Mesh), Textured (shows simple skinning of Static Mesh), Dynamic Light (shows illuminated Static Mesh), Zone/Portal (shows Static Mesh in color of current Zone), Light Map only

- Support for Mirror about X/Y/Z Axis (like Brushes)

- Support for mirrored U/V Coordinates

- minor new Light Options like LightIntensity, LightColorIntensity, showing which Light is relevant (by pointing an arrow at it), the current Light Count, showing the LightMap only etc.

- Support for per-Particle Lighting (warning: don't use it excessively!)

- Material Support (Texture Layers, Detail Textures etc.) → DT_AdvMesh

- Lensflare, DirectionalCorona and DirectionalLensflare revamped and working

- formerly known as Vertex Sprites

- recoded and unified all volume sprite actors into Volume Corona and Volume Sprite

- updated them with newer code from the other basic sprite system

- messed up / displaced wireframe rendering of Static Meshes in 2D view

- performance drop when viewing Static Meshes in 2D view or 3D wireframe view

- "ran out of virtual memory" error removed

- critical error when duplicating Static Meshes

- critical error when changing mesh

- Static Mesh disappearing when deleting Emitters or other linked-list Actors

- non-working new Texture Blending

- incorrect z-displacement of Static Meshes when standard 3D Meshes were rendered too (the Static Mesh was rendered before these meshes)

- non-working visibility / performance checks

- fixed: rewrote 100% of the code for the new Texture Blending Modes

- Blending Modes are no longer merged together when they use the same PolyFlags (OpenGLDrv.cpp)

- allows for full support of mixing all new blend modes being displayed at the same time (!)

- fixed: crash when setting bParticles=True with no Texture set (old Bug Epic Games never fixed)
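To illustrate what the BE_ modes above do: assuming they map onto OpenGL's glBlendEquation modes (GL_FUNC_ADD, GL_FUNC_SUBTRACT, GL_FUNC_REVERSE_SUBTRACT, GL_MIN, GL_MAX), each one combines the incoming color (src) with the framebuffer color (dst) per channel roughly as sketched below. This is a CPU-side illustration, not the SDK's OpenGLDrv.cpp code; BlendEquation, Blend and BlendStateKey are hypothetical names. The key struct shows one possible way to keep different blend modes from being merged just because their PolyFlags match.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>

// Hypothetical mirror of the SDK's BE_ modes, assuming they map onto
// OpenGL's glBlendEquation modes.
enum class BlendEquation { Add, Subtract, ReverseSubtract, Min, Max };

// Blends one color channel in [0, 1]. src is the incoming fragment, dst the
// framebuffer value; srcFactor/dstFactor play the role of glBlendFunc factors.
float Blend(BlendEquation eq, float src, float dst,
            float srcFactor, float dstFactor) {
    float result = 0.0f;
    switch (eq) {
        case BlendEquation::Add:             result = src * srcFactor + dst * dstFactor; break;
        case BlendEquation::Subtract:        result = src * srcFactor - dst * dstFactor; break;
        case BlendEquation::ReverseSubtract: result = dst * dstFactor - src * srcFactor; break;
        // As in OpenGL, Min and Max ignore the blend factors.
        case BlendEquation::Min:             result = std::min(src, dst); break;
        case BlendEquation::Max:             result = std::max(src, dst); break;
    }
    // A fixed-point framebuffer clamps the result to [0, 1].
    return std::max(0.0f, std::min(result, 1.0f));
}

// Hypothetical batching key: two polys may share a draw batch only if both
// their PolyFlags and their blend equation match.
struct BlendStateKey {
    std::uint32_t polyFlags;
    BlendEquation equation;
    bool operator==(const BlendStateKey& o) const {
        return polyFlags == o.polyFlags && equation == o.equation;
    }
};
```

For example, BE_ReverseSubtract with both factors at 1, src = 0.25 and dst = 0.5 gives 0.25, while BE_Subtract with the same inputs goes negative and clamps to 0.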

Particle Engine

- added: new Emitter Class

- Volume Sprite Emitter: renders a set of sub sprites at the vertices of a given mesh as particles; the size of the mesh and the size of the sub sprites can be changed independently, the range of used vertices can be changed, and it supports 3D scale and a fixed animation frame of the mesh

- changeable render order of particles (newest first, oldest first)

- changeable render order of coronas (before or after particle)

- standard 32-bit hue/brightness/saturation color lighting on a per-Particle basis

- supported by Static Meshes (hope to get support for BSP in future releases)

- updated: improved performance in Wireframe Mode
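A rough sketch of the Volume Sprite Emitter idea as described above: one sub sprite per mesh vertex in a chosen range, with the mesh's 3D scale and the sprite size applied independently, plus the newest-first / oldest-first render order switch. All type and function names here are hypothetical, and the vertex positions are assumed to come from one fixed animation frame already extracted from the mesh.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

struct Vec3 { float x, y, z; };

// One sub sprite placed at a mesh vertex.
struct SubSprite {
    Vec3 position;
    float size;  // sprite size, independent of the mesh scale
};

// Places one sub sprite per vertex in [firstVertex, lastVertex] (inclusive).
// Vertices are scaled per axis and offset by the emitter origin; the sprite
// size is applied separately.
std::vector<SubSprite> BuildVolumeSprites(const std::vector<Vec3>& frameVerts,
                                          Vec3 origin, Vec3 meshScale3D,
                                          std::size_t firstVertex,
                                          std::size_t lastVertex,
                                          float spriteSize) {
    std::vector<SubSprite> sprites;
    if (frameVerts.empty() || firstVertex > lastVertex) return sprites;
    lastVertex = std::min(lastVertex, frameVerts.size() - 1);  // clamp range
    for (std::size_t i = firstVertex; i <= lastVertex; ++i) {
        const Vec3& v = frameVerts[i];
        sprites.push_back({{origin.x + v.x * meshScale3D.x,
                            origin.y + v.y * meshScale3D.y,
                            origin.z + v.z * meshScale3D.z},
                           spriteSize});
    }
    return sprites;
}

// Hypothetical particle record; only the spawn time matters for ordering.
struct Particle { float spawnTime; };
enum class RenderOrder { NewestFirst, OldestFirst };

// Sorts particles into draw order; stable_sort keeps equal-age particles
// in their existing relative order.
void SortForRender(std::vector<Particle>& ps, RenderOrder order) {
    std::stable_sort(ps.begin(), ps.end(),
        [order](const Particle& a, const Particle& b) {
            return order == RenderOrder::NewestFirst ? a.spawnTime > b.spawnTime
                                                     : a.spawnTime < b.spawnTime;
        });
}
```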

Post Processing

- fixed: non-working Texture Blending

System Functionality / Packages

- added: version build Info for graphics drivers (on request)

- added: new Static Mesh Package 'ShowcaseStatic'

- updated: improved Linked List management

- unified the use of linked lists for all subclasses of sdkActor (only uses one list now)

- you can now filter which linked-list actors are refreshed during a rebuild (RefreshClassesList)
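The unified linked list and the RefreshClassesList filter could look roughly like this: every sdkActor subclass is chained into one intrusive list, and a rebuild only refreshes actors whose class is in the filter. SdkActorNode, SdkActorList and RefreshDuringRebuild are hypothetical names, and treating an empty filter as "refresh everything" is an assumption.

```cpp
#include <cassert>
#include <string>
#include <unordered_set>

// Hypothetical stand-in for an sdkActor subclass instance. All subclasses
// share one intrusive singly linked list through 'next'.
struct SdkActorNode {
    std::string className;
    SdkActorNode* next = nullptr;
    int refreshCount = 0;  // how often this actor was refreshed
    void Refresh() { ++refreshCount; }
};

struct SdkActorList {
    SdkActorNode* head = nullptr;

    // O(1) insertion at the head of the single shared list.
    void Add(SdkActorNode* a) { a->next = head; head = a; }

    // Unlinks 'a' and relinks its predecessor; returns true if it was found.
    bool Remove(SdkActorNode* a) {
        for (SdkActorNode** link = &head; *link; link = &(*link)->next) {
            if (*link == a) { *link = a->next; a->next = nullptr; return true; }
        }
        return false;
    }

    // Refreshes only actors whose class is in 'refreshClasses'.
    // Assumption: an empty filter means "refresh everything".
    int RefreshDuringRebuild(const std::unordered_set<std::string>& refreshClasses) {
        int refreshed = 0;
        for (SdkActorNode* a = head; a; a = a->next) {
            if (refreshClasses.empty() || refreshClasses.count(a->className)) {
                a->Refresh();
                ++refreshed;
            }
        }
        return refreshed;
    }
};
```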

UnrealED / Unreal Script / C++

- added: new Bounding Volume features / classes

- option to render volume during gameplay

- full support for Pawns/AI and Players

- particle support (numerous options to check collision with particles)

- fade-in/fade-out time for each post process material individually

- new particle related volumes: ParticleVolume, EmitterVolume, EmitterPhysicsVolume, ParticleWarpVolume..

- added: Light Pool Support

- SDKLevelInfo has a list of all lights in the level

- critical actors like Static Meshes now iterate only through THAT list instead of through all actors, so they take only relevant lights into their calculations; this speeds up overall Static Mesh lighting calculation
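A minimal sketch of the Light Pool idea: the level keeps one list of lights, and each Static Mesh tests only that list against its bounding sphere instead of iterating over every actor. PoolLight, LightPool and RelevantLights are hypothetical names, and the sphere-overlap test is an assumed relevance check (the Zone, Volume and LineCheck conditions mentioned earlier are not modelled here).

```cpp
#include <cassert>
#include <vector>

struct Vec3 { float x, y, z; };

static float DistSquared(const Vec3& a, const Vec3& b) {
    float dx = a.x - b.x, dy = a.y - b.y, dz = a.z - b.z;
    return dx * dx + dy * dy + dz * dz;
}

// Hypothetical light record; 'radius' is assumed to be in world units.
struct PoolLight {
    Vec3 location;
    float radius;
};

// Hypothetical stand-in for the list SDKLevelInfo keeps: every light in the
// level is registered once, so meshes never have to walk all actors.
struct LightPool {
    std::vector<const PoolLight*> lights;

    void Register(const PoolLight* l) { lights.push_back(l); }

    // Returns only the lights whose radius reaches the mesh's bounding
    // sphere (center + radius), i.e. the lights relevant for its lighting.
    std::vector<const PoolLight*> RelevantLights(const Vec3& meshCenter,
                                                 float meshRadius) const {
        std::vector<const PoolLight*> relevant;
        for (const PoolLight* l : lights) {
            float reach = l->radius + meshRadius;
            // Compare squared distances to avoid a sqrt per light.
            if (DistSquared(l->location, meshCenter) <= reach * reach)
                relevant.push_back(l);
        }
        return relevant;
    }
};
```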

Gameplay & Level Design

- updated: highly improved performance in the Showcase Maps

- about 60% performance increase in the Material, Items and Particle Showcase Maps

- about 50% performance increase in the Start Map, about 50% decrease in filesize

Menus / Options

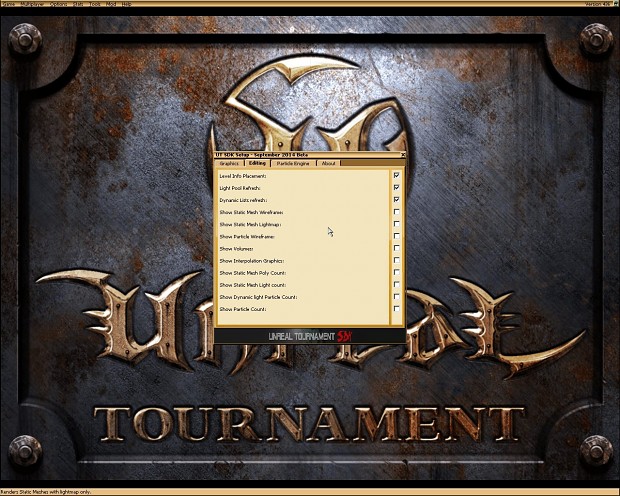

- added: long awaited full Options Menu

- configure the SDK the way you need

- categories include: General, Editing, About, Graphics, FX, Gameplay, Particle Engine

Re: [INFO] Development Journal

Really GREAT work, I'm truly impressed. Keep it up

- Shadow

- Masterful

- Posts: 743

- Joined: Tue Jan 29, 2008 12:00 am

- Personal rank: Mad Carpenter

- Location: Germany


Re: [INFO] Development Journal

Thanks Higor and Radi for your anticipation. Higor, I FINALLY managed to write you back.

Well guys, development is going well so far. Having nearly finished the new options menus, I'm now making these options work in the system, cleaning up the renderer, going through all relevant classes, messing around with some mesh code (for the UT Remodelling Project).. and some delicate surprises xD

Well.. let's say: f*ck the old mover class!
